Infor M3 Enterprise Collaborator (MEC) now includes support for the SSH File Transfer Protocol (SFTP), a secure file transfer protocol.

FTP vs. PGP vs. SFTP vs. FTPS

Plain FTP does not provide security properties such as confidentiality (against eavesdropping) and integrity (against tampering). FTP does provide authentication, but the credentials travel in plain text. As such, plain FTP is insecure and strongly discouraged.

Even coupled with PGP file encryption and signature verification to protect the contents of the file, the protocol, the credentials, the files and the folders are still vulnerable.

On the other hand, SFTP provides secure file transfer over an insecure network. SFTP runs on top of SSH, as an SSH subsystem. This is what I will explore in this post.

There is also FTPS (also known as FTP-SSL and FTP Secure). Maybe I will explore that in another post.

MEC 9.x, 10.x, 11.4.2.0

If you have MEC 11.4.2.0 or earlier, your MEC does not have full built-in support for SFTP or FTPS. I found some traces in MEC 10.4.2.0, 11.4.1 and 11.4.2. I was also told that some MEC 9.x version supported SFTP through a plugin of sorts; maybe they meant FTPS. Anyway, if you are handy, you can make it work. Or you can manually install any SFTP/FTPS software of your choice and connect it to MEC. Do not wait to secure your file transfers.

MEC 11.4.3.0

MEC 11.4.3.0 comes with built-in support for SFTP, and I found traces of FTPS. Unfortunately, it did not yet ship with documentation. I was told a writer is documenting it now. Anyway, we can figure it out by ourselves. Let’s try.

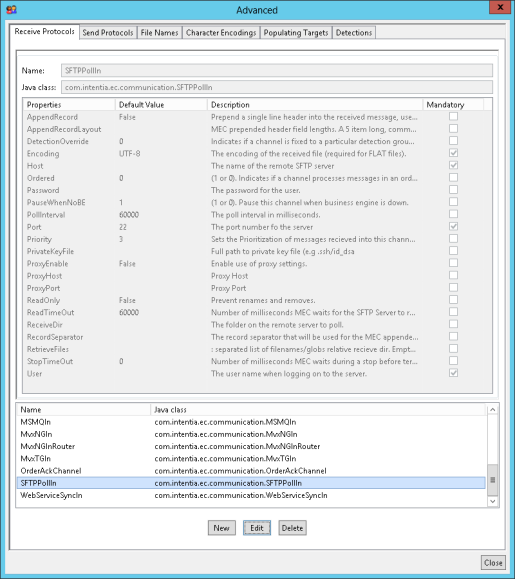

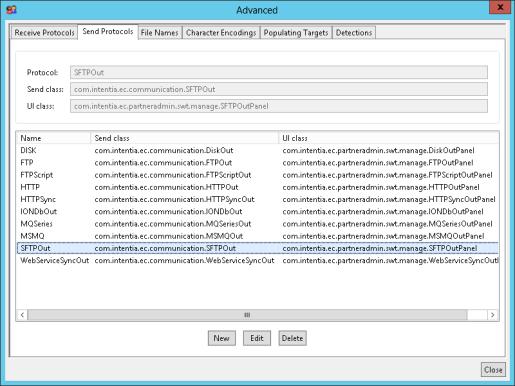

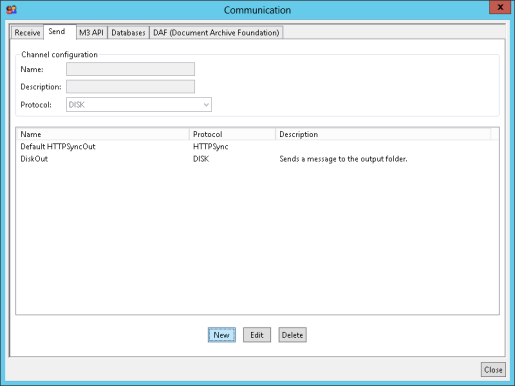

In Partner Admin > Manage > Advanced, there are two new SFTP channels, SFTPPollIn and SFTPOut, which are SFTP clients:

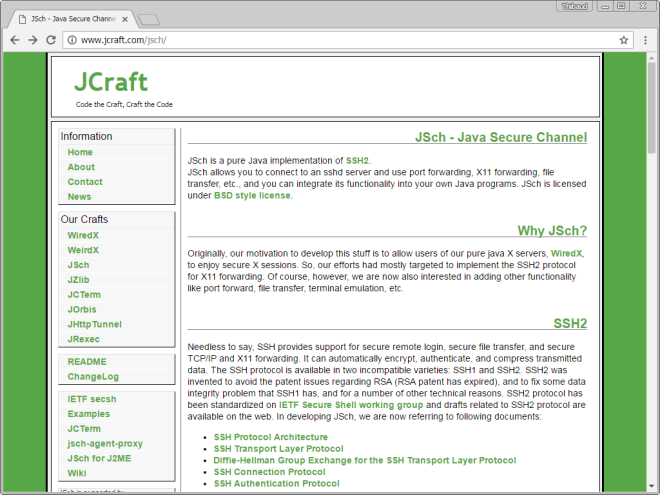

By looking deeper at the Java classes, we find JCraft JSch, a pure Java implementation of SSH2, and Apache Commons VFS2:

SFTPOut channel

In this post, I will explore the SFTPOut channel in MEC, which is an SFTP client that lets MEC exchange files with an existing SFTP server.

Unit tests

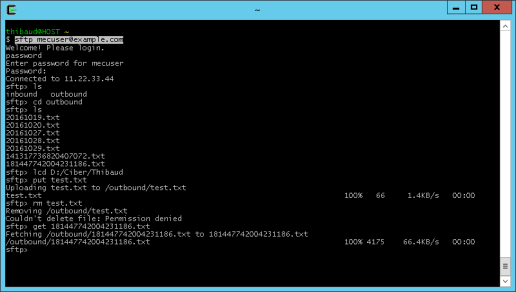

Prior to setting up MEC SFTPOut, we have to ensure our MEC host can connect to the SFTP server. In my case, I am connecting to example.com [11.22.33.44], on the default port 22, with userid mecuser, and path /outbound. Contact the SFTP server administrator to get the values and, if necessary, the networking team to adjust firewall rules, name servers, etc.

Do the basic networking tests (ICMP ping, DNS resolution, TCP port, etc.):
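The DNS and TCP parts of those checks can also be scripted in Java, which is handy if you run it with the same JRE that MEC uses. The following is a minimal sketch, not part of MEC: the class name `SftpReachability` and its methods are hypothetical, and example.com and port 22 are simply the example values from above. It resolves the host name, opens a TCP connection, and reads the identification string that an SSH server sends when a client connects. It does not test ICMP.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.InetAddress;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

// Hypothetical helper, not part of MEC: checks DNS, TCP, and the SSH banner.
public class SftpReachability {

    /**
     * Resolves the host, connects over TCP, and returns the SSH identification
     * line (for example "SSH-2.0-..."), or null if the server sent none.
     */
    public static String readBanner(String host, int port, int timeoutMillis) throws IOException {
        // DNS resolution; throws UnknownHostException if the name cannot be resolved
        InetAddress address = InetAddress.getByName(host);
        try (Socket socket = new Socket()) {
            // TCP connection; throws IOException if the port is closed or filtered
            socket.connect(new InetSocketAddress(address, port), timeoutMillis);
            socket.setSoTimeout(timeoutMillis);
            BufferedReader in = new BufferedReader(
                    new InputStreamReader(socket.getInputStream(), StandardCharsets.US_ASCII));
            // A server may send other lines before its identification string,
            // so skip a few lines until one starts with "SSH-"
            String line;
            int linesRead = 0;
            while ((line = in.readLine()) != null && linesRead++ < 20) {
                if (line.startsWith("SSH-")) {
                    return line;
                }
            }
            return null;
        }
    }

    /** True if the banner announces SSH protocol version 2 (1.99 means 2 with legacy compatibility). */
    public static boolean looksLikeSsh2(String banner) {
        return banner != null && (banner.startsWith("SSH-2.0-") || banner.startsWith("SSH-1.99-"));
    }

    public static void main(String[] args) throws IOException {
        String host = args.length > 0 ? args[0] : "example.com"; // example value
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 22;
        String banner = readBanner(host, port, 5000);
        System.out.println(host + ":" + port + " -> " + banner);
        System.out.println(looksLikeSsh2(banner) ? "SSH-2 server detected" : "No SSH-2 banner received");
    }
}
```

Run it as `java SftpReachability example.com 22`. An exception points at DNS or the firewall; a missing banner suggests that something other than an SSH server is listening on that port.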

Then, do an SSH test (in my example I use the OpenSSH client of Cygwin). As usual with SSH TOFU, verify the fingerprint on a side channel (e.g. via secure email, or via phone call to the administrator of the SFTP server assuming we already know their voice):

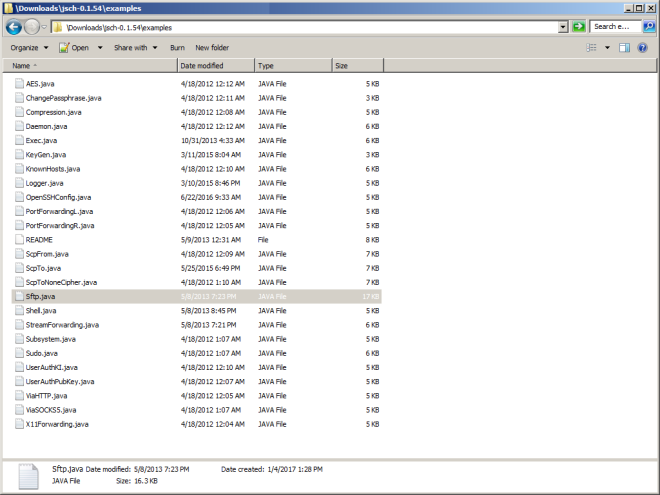
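When comparing the fingerprint shown by the SSH client with the one the administrator gives you, it helps to know how it is derived. OpenSSH displays either `SHA256:` followed by the unpadded Base64 of the SHA-256 digest of the public key blob, or (in older versions, or on request) the MD5 digest as colon-separated hex. Here is a minimal sketch, assuming Java 8 or later; the class name `HostKeyFingerprint` is hypothetical, and the input is the Base64 field of the server's public key line (as found in known_hosts or a .pub file).

```java
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.Base64;

// Hypothetical helper, not part of MEC or JSch: computes OpenSSH-style fingerprints.
public class HostKeyFingerprint {

    /** SHA-256 fingerprint: "SHA256:" plus the unpadded Base64 of the digest of the key blob. */
    public static String sha256(String base64KeyBlob) throws NoSuchAlgorithmException {
        byte[] blob = Base64.getDecoder().decode(base64KeyBlob);
        byte[] digest = MessageDigest.getInstance("SHA-256").digest(blob);
        return "SHA256:" + Base64.getEncoder().withoutPadding().encodeToString(digest);
    }

    /** MD5 fingerprint: "MD5:" plus the digest as colon-separated lowercase hex pairs. */
    public static String md5(String base64KeyBlob) throws NoSuchAlgorithmException {
        byte[] blob = Base64.getDecoder().decode(base64KeyBlob);
        byte[] digest = MessageDigest.getInstance("MD5").digest(blob);
        StringBuilder sb = new StringBuilder("MD5:");
        for (int i = 0; i < digest.length; i++) {
            if (i > 0) {
                sb.append(':');
            }
            sb.append(String.format("%02x", digest[i] & 0xff));
        }
        return sb.toString();
    }

    public static void main(String[] args) throws NoSuchAlgorithmException {
        if (args.length != 1) {
            System.err.println("Usage: java HostKeyFingerprint <base64-key-blob>");
            return;
        }
        System.out.println(sha256(args[0]));
        System.out.println(md5(args[0]));
    }
}
```

The value printed must match, character for character, the fingerprint obtained over the side channel. If it does not, do not accept the host key.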

Optionally, compile and execute the Sftp.java example of JSch. For that, download Ant, set JAVA_HOME and ANT_HOME in build.bat, set the user@host in Sftp.java, and execute this:

build.bat

javac -cp build examples\Sftp.java

java -cp build;examples Sftp

Those tests confirm our MEC host can successfully connect to the SFTP server, authenticate, and exchange files (in my case I have permissions to put and retrieve files, not to remove files).

Now, we are ready to do the same SFTP in MEC.

Partner Admin

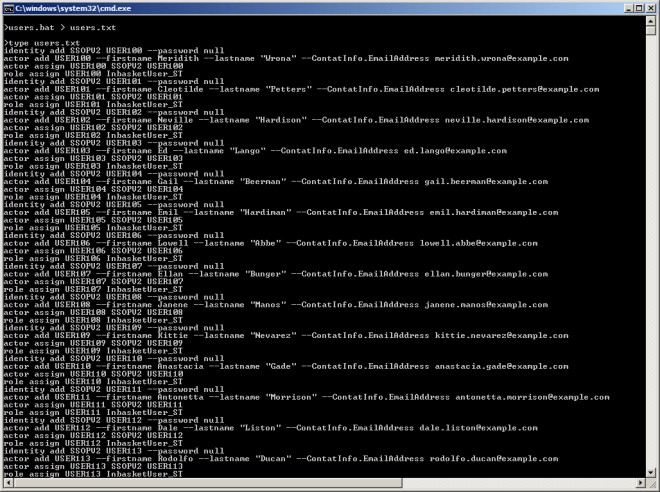

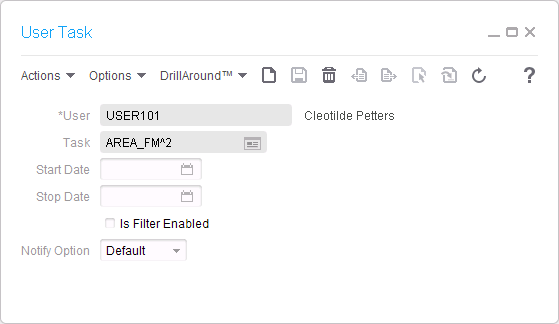

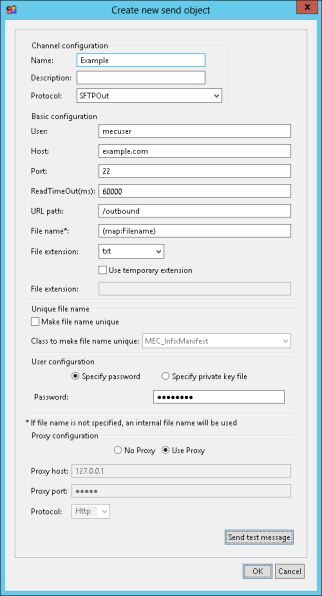

In Partner Admin > Manage > Communication, create a new Send channel with protocol SFTPOut, hostname, port, userid, password, path, filename, extension, and other settings:

I have not yet played with all the file name options.

The option for private key file is for key-based client authentication (instead of password-based client authentication). For that, generate a public/private RSA key pair, for example with ssh-keygen, send the public key to the SFTP server administrator, and keep the private key for MEC.
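If ssh-keygen is not available on the MEC host, the key pair can also be produced with the JDK alone. This is a sketch under assumptions, not a tested MEC procedure: the class `RsaKeyPairSketch`, the comment `mecuser@mec`, and the file name `mec_sftp_key.pem` are hypothetical, and I have not verified which private key formats the MEC channel accepts (the sketch writes unencrypted PKCS#8 PEM; ssh-keygen writes its own formats). The public key is encoded in the one-line `ssh-rsa` form that SSH servers expect.

```java
import java.io.ByteArrayOutputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.security.KeyPair;
import java.security.KeyPairGenerator;
import java.security.NoSuchAlgorithmException;
import java.security.PrivateKey;
import java.security.interfaces.RSAPublicKey;
import java.util.Base64;

// Hypothetical helper, not part of MEC: generates an RSA key pair with the JDK.
public class RsaKeyPairSketch {

    public static KeyPair generate(int bits) throws NoSuchAlgorithmException {
        KeyPairGenerator generator = KeyPairGenerator.getInstance("RSA");
        generator.initialize(bits);
        return generator.generateKeyPair();
    }

    /** Encodes the public key as an OpenSSH line: "ssh-rsa <base64 blob> <comment>". */
    public static String toOpenSshPublicKey(RSAPublicKey key, String comment) throws IOException {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        DataOutputStream out = new DataOutputStream(buffer);
        // The blob is three length-prefixed fields: key type, public exponent, modulus
        writeField(out, "ssh-rsa".getBytes(StandardCharsets.US_ASCII));
        // BigInteger.toByteArray() is big-endian two's complement, as SSH expects for integers
        writeField(out, key.getPublicExponent().toByteArray());
        writeField(out, key.getModulus().toByteArray());
        return "ssh-rsa " + Base64.getEncoder().encodeToString(buffer.toByteArray()) + " " + comment;
    }

    /** Encodes the private key as unencrypted PKCS#8 PEM (assumption: not verified against MEC). */
    public static String toPkcs8Pem(PrivateKey key) {
        Base64.Encoder encoder = Base64.getMimeEncoder(64, new byte[] {'\n'});
        return "-----BEGIN PRIVATE KEY-----\n"
                + encoder.encodeToString(key.getEncoded())
                + "\n-----END PRIVATE KEY-----\n";
    }

    private static void writeField(DataOutputStream out, byte[] data) throws IOException {
        out.writeInt(data.length); // 4-byte big-endian length prefix
        out.write(data);
    }

    public static void main(String[] args) throws Exception {
        KeyPair pair = generate(3072);
        // The public line goes to the SFTP server administrator
        System.out.println(toOpenSshPublicKey((RSAPublicKey) pair.getPublic(), "mecuser@mec"));
        // The private key stays on the MEC host; restrict the file permissions afterwards
        Files.write(Paths.get("mec_sftp_key.pem"),
                toPkcs8Pem(pair.getPrivate()).getBytes(StandardCharsets.US_ASCII));
    }
}
```

Since the private key file is written without a passphrase, protect it with file system permissions, and never send it to anyone.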

The button Send test message will send a file of our choice to that host/path:

The proxy settings are useful for troubleshooting.

A network trace in Wireshark confirms it is SSH:

Now, we are ready to use the channel as we would use any other channel in MEC.

Problems

- The SFTPOut channel does not allow us to verify the key fingerprint it receives from the SFTP server. Depending on your threat model, this is a security vulnerability.

- There is a lack of documentation (they are working on it)

- At first, I could not get the Send test message to work because of the unique file name (I am not familiar with the options) and the jzlib JAR file (see below).

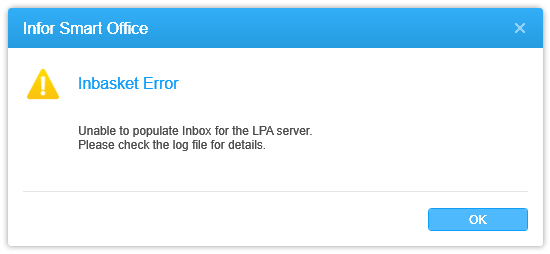

- MEC is missing the jzlib JAR file, and I got this Java stack trace:

com.jcraft.jsch.JSchException: java.lang.NoClassDefFoundError: com/jcraft/jzlib/ZStream

at com.jcraft.jsch.Session.initDeflater(Session.java:2219)

at com.jcraft.jsch.Session.updateKeys(Session.java:1188)

at com.jcraft.jsch.Session.receive_newkeys(Session.java:1080)

at com.jcraft.jsch.Session.connect(Session.java:360)

at com.jcraft.jsch.Session.connect(Session.java:183)

at com.intentia.ec.communication.SFTPHandler.jsch(SFTPHandler.java:238)

at com.intentia.ec.communication.SFTPOut.send(SFTPOut.java:66)

at com.intentia.ec.partneradmin.swt.manage.SFTPOutPanel.widgetSelected(SFTPOutPanel.java:414)

I was told it should be resolved in the latest Infor CCSS fix. Meanwhile, download the JAR file from JCraft JZlib, copy it to the following folders, and restart the MEC Grid application and Partner Admin:

MecMapGen\lib\

MecServer\lib\

Partner Admin\classes\

- Passwords are stored in clear text in the database. That is a security vulnerability, yikes!

SELECT PropValue FROM PR_Basic_Property WHERE PropKey='Password'

I was told it should be fixed in the Infor Cloud branch, and is scheduled to be merged back.

- With the proxy (Fiddler in my case), I was only able to intercept a CONNECT request, nothing else; I do not know if that is the intention.

- In one of our customer environments, the SFTPOut panel threw:

java.lang.ClassNotFoundException: com.intentia.ec.partneradmin.swt.manage.SFTPOutPanel
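Both class-loading errors above (the missing jzlib `ZStream` and the missing `SFTPOutPanel`) come down to a class that is not on the classpath of the component that needs it. A quick way to check is a small diagnostic run with that component's classpath. This is a minimal sketch; the class `ClasspathCheck` is hypothetical and not part of MEC, and the class names it probes by default are the ones from the stack traces above.

```java
import java.security.CodeSource;

// Hypothetical diagnostic, not part of MEC: reports whether classes can be loaded and from where.
public class ClasspathCheck {

    /** Returns where the class was loaded from, or null if it cannot be loaded. */
    public static String location(String className) {
        try {
            // initialize=false: only locate and load the class, do not run its static initializers
            Class<?> c = Class.forName(className, false, ClasspathCheck.class.getClassLoader());
            CodeSource source = c.getProtectionDomain().getCodeSource();
            if (source == null || source.getLocation() == null) {
                return "(no code source, typically a JDK class)";
            }
            return source.getLocation().toString();
        } catch (ClassNotFoundException | LinkageError e) {
            return null;
        }
    }

    public static boolean isLoadable(String className) {
        return location(className) != null;
    }

    public static void main(String[] args) {
        String[] names = args.length > 0 ? args : new String[] {
            "com.jcraft.jzlib.ZStream",
            "com.intentia.ec.partneradmin.swt.manage.SFTPOutPanel"
        };
        for (String name : names) {
            String where = location(name);
            System.out.println(name + " -> " + (where == null ? "NOT FOUND" : where));
        }
    }
}
```

Run it with the lib folder of the component under test on the classpath, for example `java -cp "MecServer\lib\*;." ClasspathCheck` (adjust the path to your installation). A NOT FOUND result tells you which JAR file to look for.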

Future work

When I have time, I would like to:

- Try the SFTPPollIn channel, an SFTP client that polls an existing SFTP server at a set time interval

- Try the private key-based authentication

- Try SFTP through the proxy

- Try FTPS

- Keep an eye out for the three fixes (documentation, jzlib JAR file, and password protection)

Conclusion

This was an introduction to MEC’s support for SFTP, and how to set up the SFTPOut channel for MEC to act as an SFTP client and securely exchange files with an existing SFTP server. There is more to explore in future posts.


UPDATES 2017-01-13

- Corrected definition of SFTPPollIn (it is not an SFTP server as I had incorrectly said)

- Added security vulnerability about lack of key fingerprint verification in MEC SFTPOut channel

- Emphasized the security vulnerability of the passwords in clear text